Arm Motion Tracking

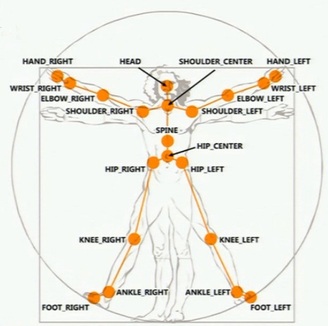

The user's arm motion is tracked by tracking the motion of the hand. This is a two-stage process because both the position and the orientation of the hand have to be tracked. The Microsoft Kinect was used to track the hand position. The Kinect sensor can track the position of up to 20 joints of a person within its field of view. These 20 joints are shown in the following figure:

As can be seen in the figure above, the centers of both palms are among the joints tracked by the Kinect. With a refresh rate of 30 Hz and a cost of 150 CAD, the Kinect sensor provides an inexpensive way to track the position of the operator's hand.
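As a minimal sketch of how the hand position might be picked out of one skeleton frame, the snippet below treats a frame as a list of 20 `(x, y, z)` tuples. The `Joint` names and indices and the `hand_position` helper are illustrative assumptions modeled on a 20-joint skeleton; they are not taken from this project's code and should be checked against the SDK actually in use.

```python
from enum import IntEnum


class Joint(IntEnum):
    """Assumed indices for a 20-joint skeleton (illustrative only)."""
    HIP_CENTER = 0
    SPINE = 1
    SHOULDER_CENTER = 2
    HEAD = 3
    SHOULDER_LEFT = 4
    ELBOW_LEFT = 5
    WRIST_LEFT = 6
    HAND_LEFT = 7
    SHOULDER_RIGHT = 8
    ELBOW_RIGHT = 9
    WRIST_RIGHT = 10
    HAND_RIGHT = 11
    HIP_LEFT = 12
    KNEE_LEFT = 13
    ANKLE_LEFT = 14
    FOOT_LEFT = 15
    HIP_RIGHT = 16
    KNEE_RIGHT = 17
    ANKLE_RIGHT = 18
    FOOT_RIGHT = 19


def hand_position(frame, right=True):
    """Return the (x, y, z) position of one hand joint from a skeleton frame.

    `frame` is assumed to be a sequence of 20 (x, y, z) tuples, one per joint.
    """
    if len(frame) != len(Joint):
        raise ValueError("expected one position per tracked joint")
    return frame[Joint.HAND_RIGHT if right else Joint.HAND_LEFT]
```

At the 30 Hz refresh rate quoted above, a function like this would be called roughly once every 33 ms.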

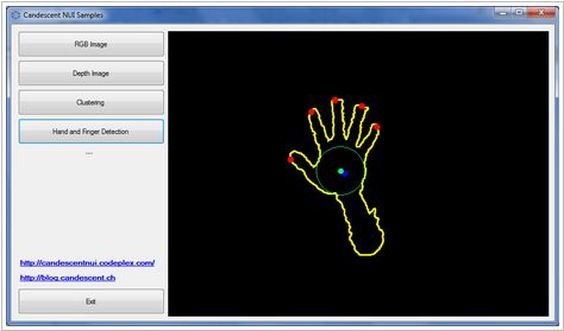

Since the Microsoft Kinect provides only position data, alternatives had to be considered for tracking the rotational motion of the operator's hand. One of the first orientation tracking solutions investigated was a Kinect-based image processing solution that detects the fingers of the human hand. This open source solution, called Candescent NUI, would allow us to detect the orientation of the operator's hand from observed changes in the position of each finger. The following figure shows a snapshot of the Candescent NUI application:

During testing of the Candescent NUI application, the following problems were discovered:

- The operational range of the solution is only 90 – 100 cm (from the base of the Kinect sensor).

- The fingers can only be detected if the hand is exactly parallel to the face of the Kinect sensor.

- The solution is unreliable: it is very susceptible to background noise.

Due to these problems, the Candescent NUI solution was rejected as an orientation tracking solution.

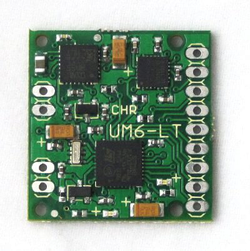

IMU

While the Kinect camera provides excellent tracking of the position of the operator's palm, the information it gives about the rotation of the wrist (pitch, roll, yaw) is not adequate for precise control of the robotic arm's end effector. Our solution therefore employs an Inertial Measurement Unit (IMU) that sits on top of the operator's wrist as part of a glove assembly. This IMU tracks the roll, pitch and yaw of the operator's arm and, together with the position information from the Kinect, allows the robotic arm's end effector to be controlled in all of its degrees of freedom.
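One way to picture how the two data sources combine is to merge the Kinect position and the IMU angles into a single 4x4 homogeneous transform describing the hand pose. The sketch below is only an illustration: it assumes angles in radians and a Z-Y-X (yaw, pitch, roll) rotation order, and the function names `rpy_to_matrix` and `hand_pose` are hypothetical. The actual angle convention depends on the IMU used, and the alignment between the Kinect and IMU reference frames is not handled here.

```python
import math


def rpy_to_matrix(roll, pitch, yaw):
    """3x3 rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll), angles in radians.

    The Z-Y-X order is an assumption; a given IMU may use another convention.
    """
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def hand_pose(position, roll, pitch, yaw):
    """4x4 homogeneous transform built from a position and IMU angles.

    `position` is an (x, y, z) tuple, e.g. the hand joint from the Kinect.
    """
    r = rpy_to_matrix(roll, pitch, yaw)
    x, y, z = position
    return [
        r[0] + [x],
        r[1] + [y],
        r[2] + [z],
        [0.0, 0.0, 0.0, 1.0],
    ]


# Example: hand 1.5 m in front of the sensor, rotated 90 degrees in yaw.
pose = hand_pose((0.1, 0.2, 1.5), 0.0, 0.0, math.pi / 2)
```

The three translation entries and three rotation angles together cover the six degrees of freedom of the hand, which is what makes full control of the end effector possible.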